Principles of Mixed Reality Permissions

Virtual and Augmented Reality (VR and AR) — known together as Mixed Reality (MR) — introduce a new dimension of physicality to current web security and privacy concerns. Problems that are already difficult on the 2D web, like permissions and clickjacking, become even more complex when users are immersed in a 3D experience. This is especially true on head-worn displays, where there is no analogous concept to the 2D “window,” and everything a user sees might be rendered by the web application. Compounding the difficulty of obtaining permission is the more intimate nature of the data collected by the additional sensors required to enable AR and VR experiences.

To enable immersive MR experiences, devices have sensors that not only capture information about the physical world around the user (far beyond the sensors common on mobile phones), but also capture personal details about the user and (possibly) bystanders. For example, these sensors could create detailed 3D maps of the physical world (either by using underlying platform capabilities like the ability to intersect 3D rays with a model of the world around the user, or by direct camera access), infer biometric data like height and gait, and potentially find and recognize nearby faces using the numerous cameras typically present on these devices. The infrared sensor that detects when a head-mounted device is worn could eventually disclose more detailed biometrics like perspiration and pulse rate, and some devices already incorporate eye-tracking.

For each sensor, there are straightforward uses in MR applications. A model of the world allows devices to place content on surfaces, hide content under tables, or warn users if they’re about to walk into a wall in VR. A user’s height and gait are revealed by the precise 3D motion of their head and hands in the world, information that is essential for rendering content from the correct position in an immersive experience. Eye-tracking can support natural interaction and allow disabled people to navigate using just their eyes. Access to camera data allows applications to detect and track objects in the world, like equipment being repaired or props being used in a game.

Unfortunately, there are concerns associated with each sensor. A data leak involving users’ home data could violate their right against unreasonable search; height and gait can be used as unique personal identifiers; and a malicious application could use biometric data like pupil tracking and perspiration to infer users’ political or sexual preferences, or track the location of bystanders who have not given consent and may not even be aware they are being seen. This is particularly worrying when governments may have access to this data.

At Mozilla, our mission is empowering people on the internet. The web is an integral part of modern life, and individual security and privacy are fundamental rights. When there are potential negative consequences, browsers typically request consent. However, as we collect and pass more data over the internet, we’ve fallen behind on ensuring users give informed consent. This trend could have far-reaching impact on users as more and more of their interactions move onto MR devices.

Informed Consent

The idea of informed consent originates in medical ethics and the idea that individuals have the right to exercise control over critical aspects of their lives. The internet is now a fundamental piece of people’s lives and society in general, and at Mozilla we strongly believe that informed consent is a right on the internet as well. Unfortunately, providing informed consent for internet users suffers from similar issues as informed consent in medicine, where users may not understand what they are being told and may not be motivated to consider their choices in the moment. Most importantly, the immersive web must have a foundation of trust to start from.

Obtaining informed consent requires disclosure, comprehension, and voluntariness. In order to be informed, people must have all necessary information, presented in a way they understand; in this context, that includes the data being collected or transmitted and the risks of unauthorized disclosure. To be able to consent, a person must not only be able to understand the disclosed information, but also be able to make a decision free of coercion or manipulation.

Completely and accurately presenting the information required for informed consent is challenging. Permissions have already become so complex that it is hard to communicate to users what data is gathered and the potential consequences of its use or misuse. For example, Pokémon Go uses access to the accelerometer and gyroscope in the phone to align the Pokémon with the player’s orientation in the world and determine if they might be driving (i.e. they shouldn’t be playing the game). However, the same sensor data can also be used by a bad actor to recover your password. These more subtle risks may be linked to more severe consequences.

Interactions between multiple sensors present an additional permissions challenge: what happens when we combine accelerometer data with biometric data and microphone access? What happens if we add camera access? Individually, these sensors have complex threats; taken together, it is difficult to convey the full breadth of possible risks without sounding hyperbolic.

Given the new challenges of the immersive web, we have an opportunity to rework how we approach permissions and consent to better empower people. While we don’t yet understand what to tell users, we propose four principles as the basis for approaching this problem: permissions should be progressive, accountable, comfortable, and expressive (PACE).

Principles

Progressive

The idea of progressive web applications is well understood in the web community, referring to the design of websites that work on a variety of devices and take advantage of the capabilities of each, creating progressively more capable sites as the capabilities of the device better match their needs. In Mixed Reality, the capabilities of devices are much more varied, requiring more dramatic changes to sites that want to support as many people as possible. Beyond just device capabilities, the intimate (even invasive) nature of AR sensing means that users may not want to grant the full capabilities of their device to all websites.

To both support a diversity of devices and respect user privacy, browsers need to embrace the idea of progressive permissions—giving people better controls over permission granting—by providing context for sensor access and enhancing the capabilities granted to websites gradually. This principle is closely related to the concept of informed consent; by requesting dangerous permissions out of context, sites risk providing incomplete disclosure and impacting comprehensibility. For example, most applications and sites request all necessary permissions at install or startup, then persist those permissions indefinitely.

The idea of providing context to permissions is not new; some mobile apps and websites already present people with an explanation of the permissions they will request at startup, describing why each kind of access is required. Users can then approve or deny each permission at this point. If the user later accesses a feature that requires a denied permission, the application can re-present the request.

Part of “progressivity” is responsibly collecting data only when needed and not persisting sensor use when not necessary. A person who has accepted microphone access to allow verbal input has not accepted unfettered microphone access for eavesdropping.

Therefore, progressive permissions should also be bidirectional, allowing users to turn permissions on and off repeatedly throughout the lifetime of a web app. In this example, a user might reasonably expect a site to use the microphone during input, and then stop using the sensor when input is complete—even if it still has permission to use it.
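The microphone example can be sketched in code. The following is a minimal illustration, not a browser API: `ScopedSensor`, `SensorHandle`, and the `open` callback are hypothetical names standing in for whatever the platform provides. It shows a sensor that is opened only for the duration of one task, is always closed afterwards even though permission is still granted, and stops immediately if the user revokes permission.

```typescript
// Hypothetical stand-in for a live sensor stream, such as a microphone capture.
interface SensorHandle {
  stop(): void;
}

type Permission = "granted" | "denied";

class ScopedSensor {
  private permission: Permission = "denied";
  private active: SensorHandle | null = null;
  private readonly open: () => SensorHandle;

  constructor(open: () => SensorHandle) {
    this.open = open;
  }

  // The user may flip the permission at any time; revoking also ends live capture.
  setPermission(permission: Permission): void {
    this.permission = permission;
    if (permission === "denied") {
      this.release();
    }
  }

  // Open the sensor for the duration of `task` only, and always close it afterwards.
  use<T>(task: (handle: SensorHandle) => T): T {
    if (this.permission !== "granted") {
      throw new Error("permission denied");
    }
    const handle = this.open();
    this.active = handle;
    try {
      return task(handle);
    } finally {
      this.release();
    }
  }

  isCapturing(): boolean {
    return this.active !== null;
  }

  private release(): void {
    if (this.active !== null) {
      this.active.stop();
      this.active = null;
    }
  }
}
```

In this sketch, holding a permission and actively capturing are separate states, which is the distinction the paragraph above draws: the site may keep the grant, but the sensor is live only while input is in progress.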

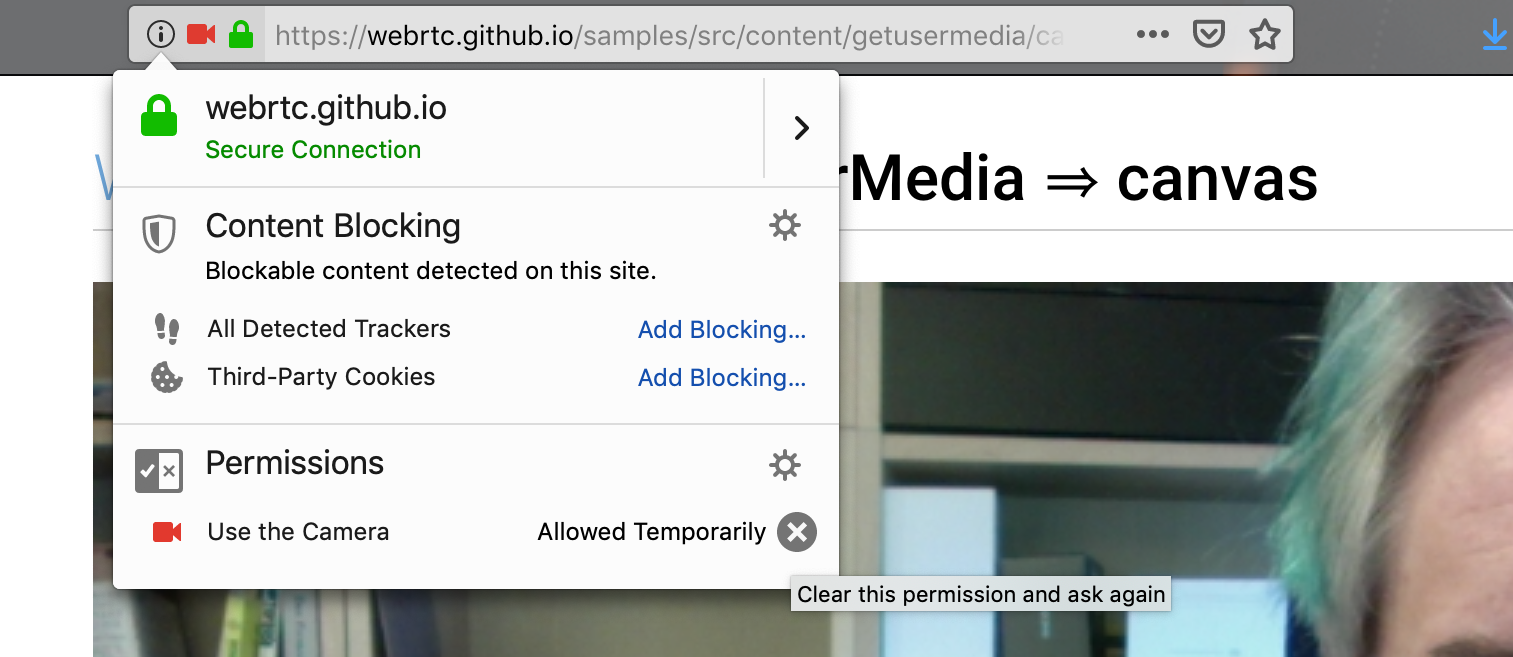

Also consider an application that requests camera access. At home, I grant it. At work, I open the application and it immediately uses the camera, compromising confidential information. We don’t want to keep prompting, but want the user to be aware of, and have control over, when sensor data is available to the application, changing permissions as they desire, depending on their preferences, context and needs (in contrast to current permissions, such as the camera permissions in the figure below). This principle is mutually reinforced by accountability.

Accountable

Accountability pertains to what happens after a permission is granted. All active or granted permissions should be easy to inspect and easy to change. We envision a user interface that is simple to access that lists:

- current permissions

- when each permission was approved/denied

- data currently collected/monitored by the page

- a toggle that allows easy switching between approval/denial of each permission (without requiring page reload)

Revocation should be straightforward, and only impact related features (revoking camera access should only affect features that require the camera, not prevent use of the entire site).
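As a rough illustration of the bookkeeping such an interface would need, here is a minimal sketch of a per-page permission ledger. All names (`PermissionLedger`, `LedgerEntry`, `registerFeature`, and so on) are hypothetical and do not correspond to any existing browser API. It records each decision with a timestamp, tracks whether a sensor is currently in use, supports toggling without a reload, and scopes revocation to the features that depend on the revoked permission.

```typescript
type Decision = "granted" | "denied";

interface LedgerEntry {
  permission: string;
  decision: Decision;
  decidedAt: Date; // when the permission was approved or denied
  inUse: boolean;  // whether the page is currently collecting this data
}

class PermissionLedger {
  private entries = new Map<string, LedgerEntry>();
  private features = new Map<string, string[]>(); // feature name -> required permissions

  // Record a decision together with the time it was made.
  decide(permission: string, decision: Decision, now: Date = new Date()): void {
    this.entries.set(permission, { permission, decision, decidedAt: now, inUse: false });
  }

  // Flip a permission in place; unknown permissions are treated as denied.
  toggle(permission: string, now: Date = new Date()): Decision {
    const current = this.entries.get(permission);
    const next: Decision = current !== undefined && current.decision === "granted" ? "denied" : "granted";
    this.decide(permission, next, now);
    return next;
  }

  // Mark a sensor as actively used; ignored unless the permission is granted.
  setInUse(permission: string, inUse: boolean): void {
    const entry = this.entries.get(permission);
    if (entry !== undefined && entry.decision === "granted") {
      entry.inUse = inUse;
    }
  }

  registerFeature(name: string, requires: string[]): void {
    this.features.set(name, requires);
  }

  // A feature is usable only if every permission it depends on is granted.
  isFeatureEnabled(name: string): boolean {
    const requires = this.features.get(name);
    if (requires === undefined) {
      return false;
    }
    return requires.every((p) => {
      const entry = this.entries.get(p);
      return entry !== undefined && entry.decision === "granted";
    });
  }

  // Everything a permissions panel would need to display.
  list(): LedgerEntry[] {
    return Array.from(this.entries.values());
  }
}
```

Because features declare their own dependencies, revoking the camera in this sketch disables only camera-dependent features and leaves the rest of the site usable.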

Additionally, when a website uses device resources, such as accessing files, there should be a method to hold the site accountable for resources accessed and/or modified. As browsers adopt new architectures to improve security through techniques like site isolation, identifying which pages are using which resources becomes easier, allowing browsers to report more accurate and granular usage data to users.

Examples of browsers continuing to execute JavaScript even after the browser is closed or the screen is turned off are troubling and violate accountability expectations. Some sensors, including motion and light sensors, aren’t protected by permissions and are exposed to JavaScript. These sensors also represent potential side channels for retrieving sensitive data and should be considered when designing accountability measures.

Comfortable

Users already report fatigue about excessive permissions requests. Embracing progressivity and accountability without taking this fatigue into account runs the risk of disrespecting users’ attention and increasing this fatigue. Therefore permissions must also be comfortable. When we talk about permissions being comfortable, we’re explicitly referring to this need to balance user control with reduced friction. Interrupting users’ tasks, asking for permission at the wrong times, and excessive permissions requests can lead people to “give up” and automatically accept permissions to “get on with it.”

As we increase the amount and variety of information being sensed, we should consider alternatives to simple permission dialogs. For example, in some cases, browsers could use implicit permissions based on user action (e.g., pressing a button to take a picture might implicitly give camera access). In 3D immersive MR, where the user is using a head-worn display, permission requests that are presented in the immersive environment should provide a comfortable UX that is easily identified as being presented by the browser (as opposed to the page). If requests are jarring or visually uncomfortable, users may not take their time and consider them, but quickly accept (or dismiss) them to return to the immersive experience. Over time, we hope the web community will develop a consistent design language for various permissions across multiple browsers and environments.

Approaches to comfort can build on the previous principles: implicitly granting one kind of permission can be balanced by maintaining accountability and visibility of what data the site has access to, and by providing a simple and obvious way to examine and modify permissions.
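One way to picture an implicit permission is as a short-lived, single-use grant tied to a user action. The sketch below is an illustration under that assumption; `GestureGrant`, its method names, and the one-second default window are all hypothetical choices rather than anything a browser implements under these names. Pressing the button creates a grant, the first capture within the window consumes it, and any later capture needs a new gesture.

```typescript
class GestureGrant {
  private expiresAtMs: number | null = null;
  private readonly windowMs: number;

  // windowMs: how long after a gesture the implicit grant stays valid (assumed value).
  constructor(windowMs: number = 1000) {
    this.windowMs = windowMs;
  }

  // Called when the user performs the action, e.g. presses a "take picture" button.
  onUserGesture(nowMs: number): void {
    this.expiresAtMs = nowMs + this.windowMs;
  }

  // Consume the grant: succeeds at most once per gesture, and only within the window.
  tryConsume(nowMs: number): boolean {
    if (this.expiresAtMs !== null && nowMs <= this.expiresAtMs) {
      this.expiresAtMs = null;
      return true;
    }
    return false;
  }
}
```

Keeping the grant single-use and time-limited means the implicit permission covers only the action the user just took, which leaves the accountability described above intact.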

Expressive

Expressiveness relates to the browser handling different permissions for different sensors differently, instead of assuming one size fits all (i.e., presenting a similar sort of prompt for any capability that needs user permission). The current permissions approach divides sensors into two categories: dangerous (requiring a prompt) and not (generally accessible without additional user input). Unfortunately, interactions between “not-dangerous” sensors, like the accelerometer and the touch screen used for input, can leak data like passwords (by watching the motion of the device when the user types)[1]. In an immersive context, devices have considerably more powerful sensors, resulting in more complex and difficult to predict interactions.

A possible solution to more expressive permissions is permission bundling, grouping related permissions together. However, this risks violating user expectations and could result in a less progressive approach.

Entering immersive mode will automatically require activating certain sensors; for example, a basic VR application will use motion sensors for rendering and be given an estimate of where the floor is so it can avoid placing virtual objects below the floor; from these, an application will be able to infer your height. These sorts of secondary inferences are not always so obvious. Even in a small study of five users, three participants believed that the only data collected by their VR device was either data they provided when creating an account or basic usage data (such as how frequently they use an application). One of these three explicitly stated that their VR system, an Oculus Rift, could not collect audio data. Only two participants were aware that the device sensors collected and transmitted much more data. The richer the application, the more likely one or more of the sensors involved will be transmitting data that can be used to uniquely identify individuals.
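The height inference mentioned above takes very little code, which is part of why it is easy to overlook. The sketch below is a deliberately naive illustration: the function name, the use of the highest observed head position, and the 0.11 m offset from eye level to the top of the head are all assumptions made for this example, not measured values or a real API.

```typescript
// headY: vertical head positions (meters) reported while rendering.
// floorY: the floor estimate (meters) in the same coordinate space.
// eyeToCrown: assumed offset from eye level to the top of the head (illustrative only).
function estimateStandingHeight(
  headY: number[],
  floorY: number,
  eyeToCrown: number = 0.11,
): number {
  if (headY.length === 0) {
    throw new Error("no head samples");
  }
  // Take the highest observed position as "standing upright". A more careful
  // estimator would discard outliers such as jumps.
  const standingEyeY = Math.max(...headY);
  return standingEyeY - floorY + eyeToCrown;
}
```

Nothing here requires a permission beyond what any immersive session already needs, which is the point: data that is essential for rendering also yields a personal attribute.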

Looking Forward

Accurately and completely explaining the data that’s being collected and potential consequences is central to acquiring informed consent, but there’s a danger that permissions prompts will become opaque legal waivers. As we add more sensors to devices and collect more personal and environmental data, it’s tempting to simply add more permission prompts. However, permission fatigue is already a serious issue.

When possible, we should identify opportunities for implicit consent. For example, you don’t have to give permission every time you move or click a mouse on the 2D web. When we do require explicit consent, platforms should provide a comfortable and consistent user experience.

The goal of permissions should be to obtain informed consent. In addition to designing technical solutions, we need to educate the public about the types of data collected by devices and potential consequences. While this should be required for making informed choices about permissions, it’s not sufficient. We need to combine the three aspects of informed consent (disclosure, comprehension, voluntariness) with the four PACE principles (progressive, accountable, comfortable, expressive) to provide an immersive web experience that empowers people to take control of their privacy on the internet.

The strength of the web is the ability for people to casually and ephemerally browse pages and follow links while knowing that their browser makes this activity safe—this is the foundation of trust in the web. This foundation becomes even more important in the immersive web due to the potential new pathways for abuse of the rich, intimate data available from these devices.

Current events demonstrate the dangers of rampant data collection and misuse of personal data on the web; mixed reality devices, and the new kinds of data they generate, present an opportunity to change the conversation about permissions and consent on the web.

We propose the PACE principles to encourage MR enthusiasts and privacy researchers to consider new approaches to data collection that will inform and empower users while respecting their time and energy. These solutions will not all be technical, but will likely include education, advocacy, and design leadership. As VR and AR devices enter the mainstream tech environment, we should proactively explore the viability of new directions, rather than waiting and reacting to the greater damage that might come from future data breaches and abuse.

[1] In this specific case, and for this reason, the devicemotion API has been deprecated in favor of a new sensor API.