Behind-the-Scenes at Hubs Hack Week

Earlier this month, the Hubs team spent a week on an internal hackathon. We figured that the start of a new year is a great time to get our roadmap in order, investigate possible new features to explore this year, and bring in some fresh perspectives on what we could accomplish. Plus, we figured that it wouldn’t hurt to have a little fun doing it! Our first hack week was a huge success, and today we’re sharing what we worked on so you can get a “behind the scenes” peek at what it’s like to work on Hubs.

Try on a new look

As part of our work on Hubs, we think a lot about expression and identity. From the beginning, we've made it a priority to allow creators to develop their own avatar styles, which is why you might find yourself in a Hubs room with robots, humans, parrots, carrots, and everything in between.

We don’t make assumptions about how you want to look on a given day with a specific group of people. That's why when an artist on the team built a series of components for a modular avatar system, we built a standalone editor instead of integrating one directly into Hubs itself.

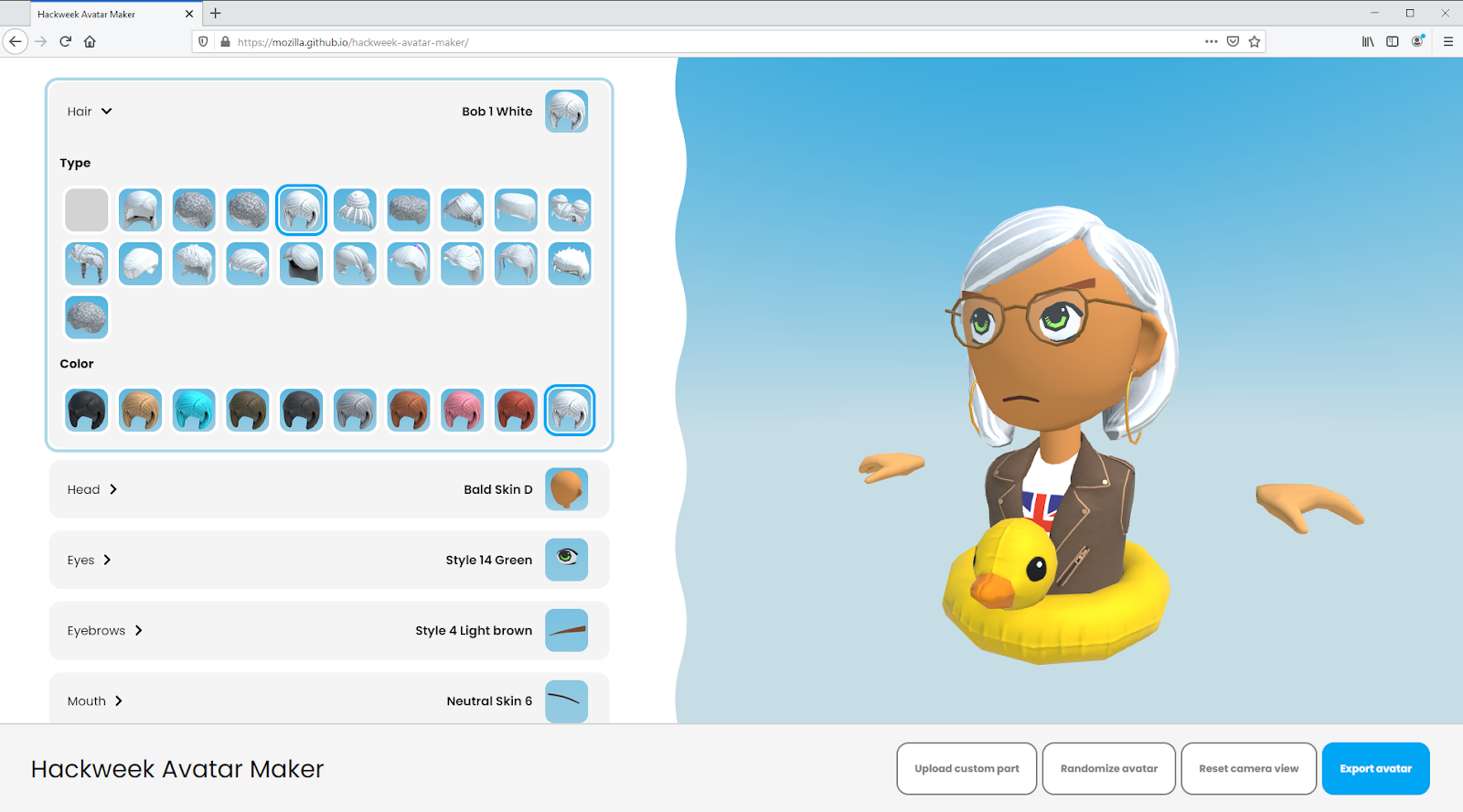

Over the past year, we’ve been delighted to see avatar editors popping up for Hubs, like Rhiannan’s editor and Ready Player Me. For hack week, we added one more to the collection for the community to play with, tinker with, and modify to your liking!

To get started, head to the hack week avatar maker website. The avatar you see when you first arrive is made out of a random combination of kit components. Use the drop-down menus on the left-hand side of the screen to pick your favorite features and accessories.

To import your avatar into Hubs, click the “Export Avatar” button to save it to your local computer, and follow these steps to upload it into Hubs.

Can you see me now? Experimenting with video feeds in Hubs

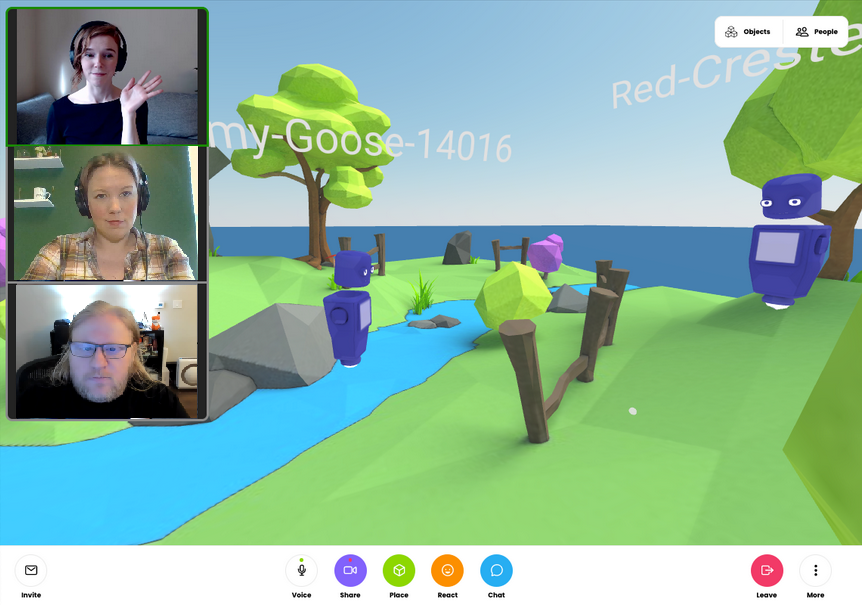

Social distancing can be tough! While Hubs is built for avatar-based communication, sometimes it’s nice to see a friendly face. We’ve gotten lots of feedback from community members asking for new ways to share their webcams in Hubs. We took that feedback to heart, and set off to see what we could do.

Our team philosophy tends to fall on the side of giving people different options, so we took on two different projects: one that would explore having camera feeds as part of the 2D user interface, and one that put them onto avatars.

Avatar-based chat apps can be a lot to take in if you’re new to 3D, so we experimented with a video feed layer that would sit on top of the Hubs world. While this is still just in a prototype stage, there’s a lot of potential here. We’re looking at doing a deeper dive into this type of feature later in the year when we can devote some time to figuring out how this could tie into the spatial audio in Hubs and our upcoming explorations into navigation in general.

While we’re probably still a ways off from having true holograms, we did figure out that we could get some virtual holograms in a Hubs room using some new billboard techniques and a couple of filters. These video avatars let you use your webcam to represent yourself in a Hubs space, and they share your video with the room so that it sticks to you as you move. When you’re wearing a video avatar, a new option appears that lets you share your webcam onto the specified video texture component. We are extremely excited to see what the community comes up with using these avatar screens.
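For readers curious about what a "billboard" means in practice, here is a minimal sketch of the general idea: a flat panel is rotated about the vertical axis so that it always faces the viewer. This is not the Hubs implementation. The `Vec3` type and the `billboardYaw` function are hypothetical names made up for illustration, and the sketch assumes a coordinate system where Y is up and an unrotated panel faces along +Z.

```typescript
// Hypothetical types and names for illustration only; not from the Hubs code base.
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

/**
 * Returns the yaw (rotation about the vertical Y axis, in radians) that turns
 * a flat panel located at `panel` so that its front (+Z) side faces `viewer`.
 * Height differences are ignored, so the panel stays upright.
 */
function billboardYaw(panel: Vec3, viewer: Vec3): number {
  const dx = viewer.x - panel.x;
  const dz = viewer.z - panel.z;
  // Rotating +Z by an angle t about Y gives (sin t, 0, cos t),
  // so the angle that points at (dx, dz) is atan2(dx, dz).
  return Math.atan2(dx, dz);
}
```

In a render loop, a value like this could be recomputed each frame and applied to the panel that carries the webcam texture, which is one way to keep a video feed facing whoever is looking at it.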

Our first iteration of the video avatars is shipping with the webcam as-is in the near future, but as you can see in the photo above, we’re experimenting with filters (hello, green screen!) to make video avatars even more personalized. Filters remain in the prototype stage, and we will continue to explore how we can incorporate them into Hubs over the coming year. Keep an eye out for featured video avatars landing on hubs.mozilla.com soon!

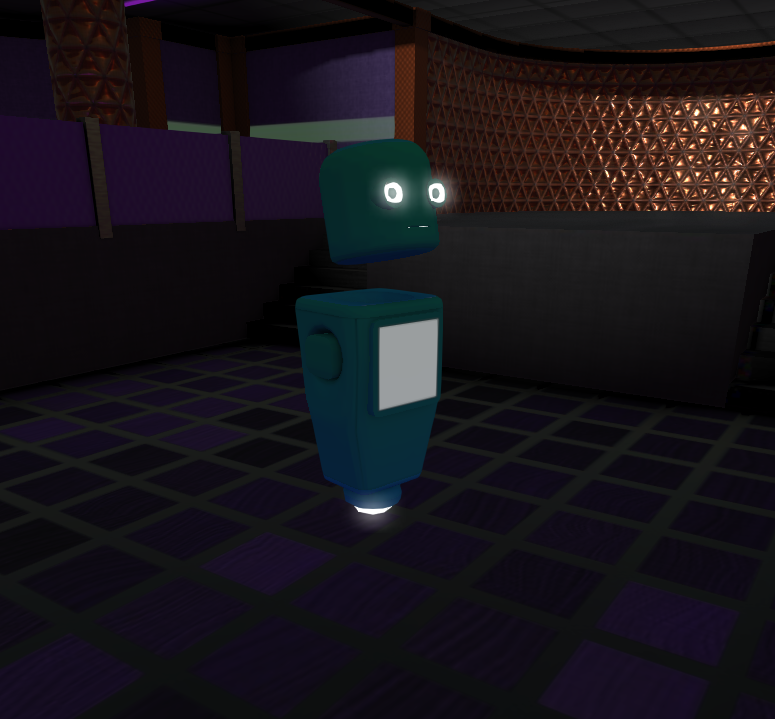

Web content in a room? Calling in to Hubs from Zoom? Finally adding lighting bloom?

A long-term goal of ours with Hubs is to make the platform easily extensible, so that more types of content can be shared in a 3D space. We had two hack week projects explore this in more detail, one specifically focused on the experience of calling into a Zoom room from Hubs and one that focused more on providing a general-purpose solution for sharing 2D web content. Extensibility is also the reason we’re able to build features like bloom (thanks, glTF!) so you can get an effect like the one pictured on the robot’s eyes below.

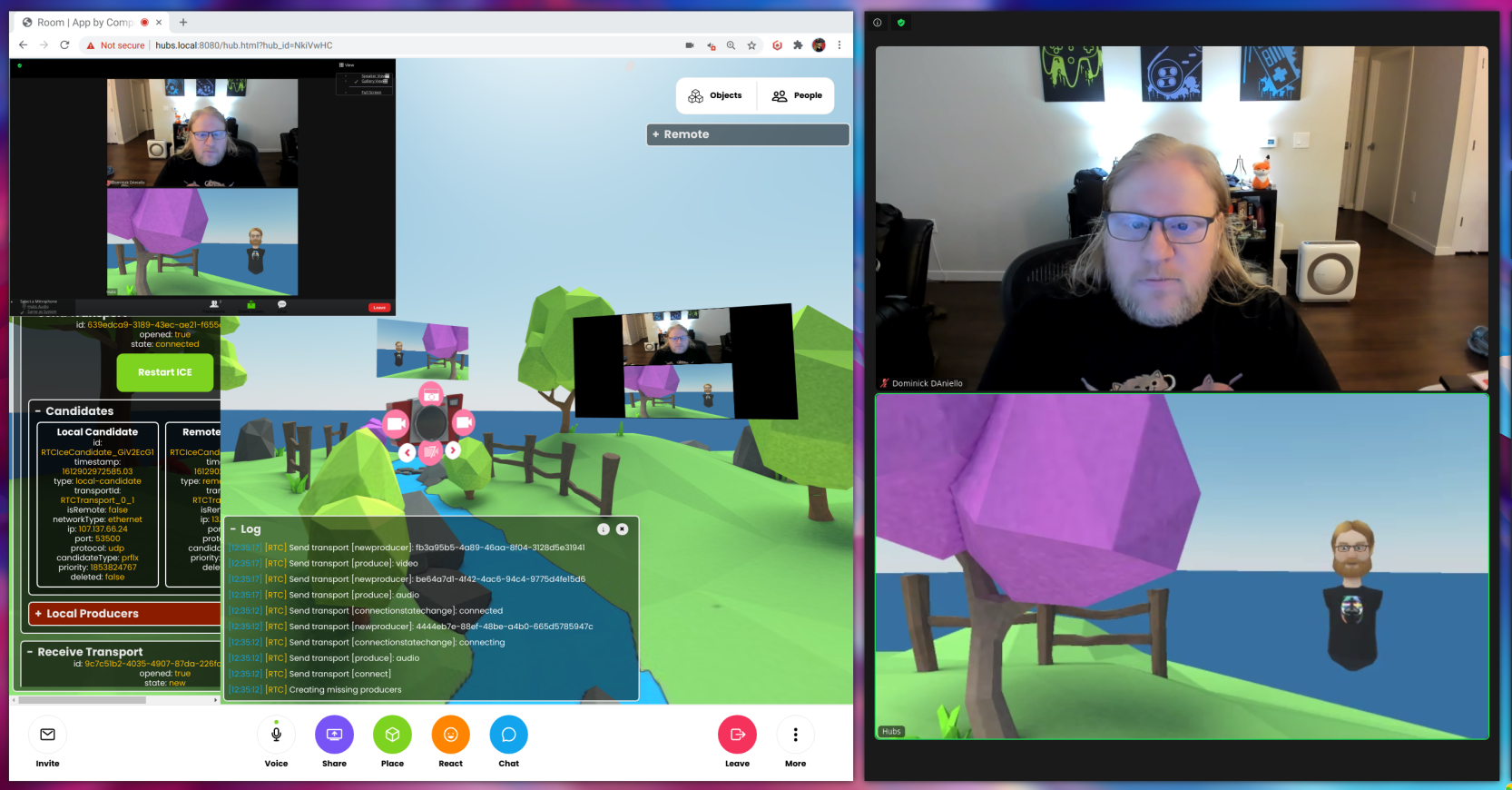

Video conferencing + Hubs

One of our goals with the recent redesign was to make it easier to use Hubs from different devices - to meet people where they are. We took this a step further by extending that mindset to meeting people where they were meeting: Zoom! While some of our team members have played around with using external tools to make a Hubs window show up as a virtual camera feed for video calls, one engineer took it a step further and brought Zoom directly into Hubs by implementing a 2-way portal between apps. Pretty cool! While we probably won’t get around to shipping this project in the next few weeks, we’d love to hear your feedback about it to get a better sense of how you might use it with Hubs.

Embedding 2D Web Content with 3D CSS iFrames

We have a lot of interest in supporting general web content in Hubs, but (for good reason!) the security considerations around websites place some limitations on what that can look like. One avenue that we’re exploring is using CSS to draw an iFrame window “in” the 3D space, and it shows a lot of promise. Using iFrames means that each client resolves the web content on its own, which has security benefits but comes with some tradeoffs. Applications that are already networked (like Google Docs or collaboration tools such as Figma or Miro) work great using this method, but there’s some additional work that has to be done to synchronize non-networked content.
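To give a rough feel for the CSS approach described above, here is a small sketch that builds the kind of `transform` value a page could apply to an iFrame element so the browser draws it at a position and angle in 3D. It is an illustration under assumptions, not the prototype's code: the `PanelPlacement` type and both function names are hypothetical, positions are in CSS pixels, and the parent element is assumed to set the CSS `perspective` property. Only the standard CSS functions `translate3d()` and `rotateY()` are used.

```typescript
// Hypothetical placement type; the names are illustrative, not from Hubs.
interface PanelPlacement {
  x: number; // horizontal offset in CSS pixels
  y: number; // vertical offset in CSS pixels
  z: number; // depth offset in CSS pixels (negative is further away)
  yawDegrees: number; // rotation about the vertical axis
}

/** Builds a CSS `transform` value that places an element in 3D. */
function panelTransform(p: PanelPlacement): string {
  return `translate3d(${p.x}px, ${p.y}px, ${p.z}px) rotateY(${p.yawDegrees}deg)`;
}

/**
 * Builds an inline style string for an iFrame whose parent element
 * is assumed to define a CSS `perspective`.
 */
function iframeStyle(p: PanelPlacement): string {
  return `position: absolute; transform: ${panelTransform(p)};`;
}
```

In a browser, the result of `panelTransform` could be assigned to an iFrame's `style.transform`. Because the frame is an ordinary DOM element, each client loads the page itself, which matches the tradeoff described above.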

Behind the scenes of the behind the scenes: prototyping new server features related to streams

Progress isn’t always visible, but that doesn’t mean that it’s not worth celebrating! In addition to some of the features that are easy to see, we also had the opportunity to dig into some server-side developments. One of these projects explored the possibility of streaming from Hubs directly to a third-party service by adding a new server-side feature to encode from a camera stream, and another tested out some alternative audio processing codecs. Both of these projects provided some great insight into the capabilities of the code base, and prompted some good ideas for future projects down the line.