Bringing WebXR to iOS

The first version of the WebXR Device API is close to being finalized, and browsers will start implementing the standard soon (if they haven't already). Over the past few months we've been updating the WebXR Viewer (source on GitHub, new version available now on the iOS App Store) to be ready when the specification is finalized, giving developers and users at least one WebXR solution on iOS. The current release is a step along this path.

Most of the work we've been doing is hidden from the user; we've rewritten parts of the app to be more modern, robust, and efficient. We've also removed little-used parts of the app, like video and image capture, that have been made obsolete by recent iOS capabilities.

There are two major parts to the recent update of the Viewer that are visible to users and developers.

A New WebXR API

We've updated the app to support a new implementation of the WebXR API based on the official WebXR Polyfill. This polyfill currently implements a version of the standard from last year, but when it is updated to the final standard, the WebXR API used by the WebXR Viewer will follow quickly behind. Keep an eye on the standard and polyfill to get a sense of when that will happen; keep an eye on your favorite tools, as well, as they will be adding or improving their WebXR support over the next few months. (The WebXR Viewer continues to support our early proposal for a WebXR API, but that API will eventually be deprecated in favor of the official one.)

We've embedded the polyfill into the app, so the API will be automatically available to any web page loaded in the app, without the need to load a polyfill or custom API. Our goal is to have any WebXR web application designed to use AR on a mobile phone or tablet run in the WebXR Viewer. You can try this now by enabling the "Expose WebXR API" preference in the Viewer. Any loaded page will then see the WebXR API exposed on navigator.xr, although most "webxr" content on the web won't work right now because the standard is in a state of constant change.
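
Because the standard is still changing, a page cannot assume which shape of the API it will find on navigator.xr. The sketch below shows one way a page might probe for it. It is an illustration, not code from the Viewer or the polyfill: the `probeWebXR` function and the `NavigatorLike` types are hypothetical names, and the sketch assumes that newer drafts expose `isSessionSupported` while older drafts expose `supportsSession`.

```typescript
// Structural types covering only the members this sketch inspects.
// Both methods are optional because different drafts expose different ones.
interface XRSystemLike {
  isSessionSupported?: (mode: string) => Promise<boolean>; // newer drafts
  supportsSession?: (mode: string) => Promise<void>; // older drafts
}

interface NavigatorLike {
  xr?: XRSystemLike;
}

type XRProbe =
  | "isSessionSupported"
  | "supportsSession"
  | "present-unknown"
  | "absent";

// Report which support-query method, if any, is reachable on nav.xr.
function probeWebXR(nav: NavigatorLike): XRProbe {
  const xr = nav.xr;
  if (!xr) {
    return "absent"; // no WebXR API exposed to this page
  }
  if (typeof xr.isSessionSupported === "function") {
    return "isSessionSupported";
  }
  if (typeof xr.supportsSession === "function") {
    return "supportsSession";
  }
  return "present-unknown"; // navigator.xr exists but has an unfamiliar shape
}
```

In a page, the global navigator object would be passed in; taking it as a parameter keeps the check easy to exercise outside a browser.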

You can find the current code for our API in the webxr-ios-js repository, along with a set of examples we're creating to explore the current API and future additions to it. These examples are available online. A glance at the code or the examples will show that we are not only implementing the initial API but also building implementations of a number of proposed additions to the standard, including anchors, hit testing, and access to real-world geometry. Support for requesting geospatial coordinate system alignment allows integration with the existing web Geolocation API, enabling AR experiences that rely on geospatial data (illustrated simply in the banner image above). We will soon be exploring an API for camera access to enable computer vision.

Most of these APIs were available in our previous WebXR API implementation, but the new implementation more closely aligns with the work of the Immersive Web Community Group. (We have also kept a few of our previous ARKit-specific APIs, but marked them explicitly as not being on the standards track yet.)

A New Approach to WebXR Permissions

The most visible change to the application is a permission dialog that pops up when a web page requests a WebXR session. Previously, the app was an early experiment devoted to WebXR applications built with our custom API, so we did not explicitly ask for permission; we inferred that anyone running an app in our experimental web browser intended to use WebXR capabilities.

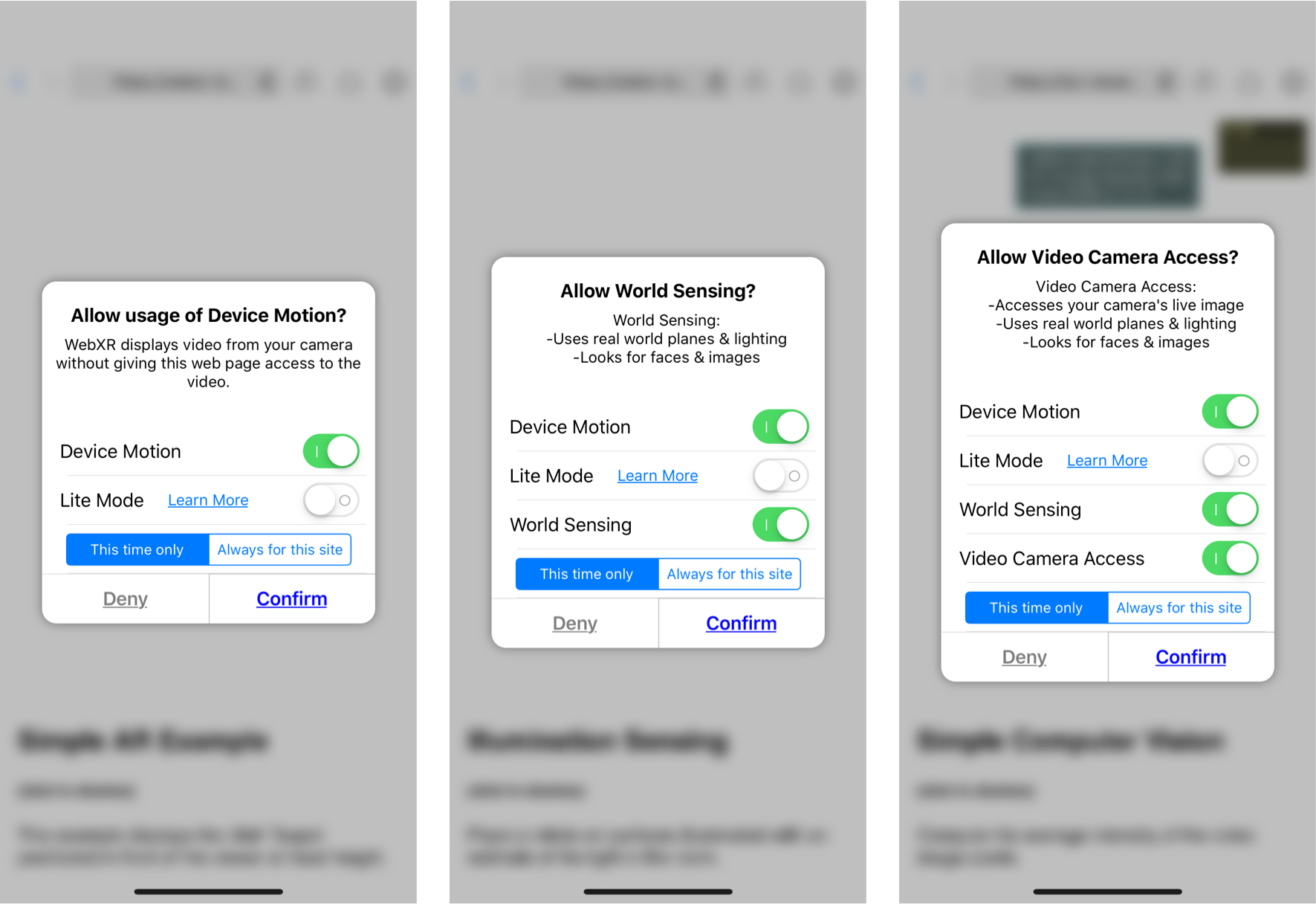

When WebXR is released, browsers will need to obtain a user's permission before a web page can access the potentially sensitive data available via WebXR. We are particularly interested in what levels of permissions WebXR should have, so that users retain control of what data apps may require. One approach that seems reasonable is to differentiate between basic access (e.g., device motion, perhaps basic hit testing against the world), access to more detailed knowledge of the world (e.g., illumination, world geometry) and finally access to cameras and other similar sensors. The corresponding permission dialogs in the Viewer are shown here.
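
To make the three tiers concrete, here is a minimal sketch of how a browser might map requested capabilities onto them. It is an assumption-laden illustration rather than the Viewer's actual logic: the `Level` constants, the feature names in `FEATURE_LEVEL`, and both functions are hypothetical, chosen only to mirror the examples given above.

```typescript
// Three ordered tiers, mirroring the levels described in the text.
const Level = { Basic: 1, WorldSensing: 2, CameraAccess: 3 } as const;
type Level = (typeof Level)[keyof typeof Level];

// Hypothetical feature names; the grouping follows the examples in the text.
const FEATURE_LEVEL: Record<string, Level> = {
  "device-motion": Level.Basic,
  "hit-test": Level.Basic,
  "illumination": Level.WorldSensing,
  "world-geometry": Level.WorldSensing,
  "camera": Level.CameraAccess,
};

// Lowest tier that covers every requested feature.
// An empty request still needs Basic, since any session exposes device motion.
function requiredLevel(features: string[]): Level {
  let level: Level = Level.Basic;
  for (const feature of features) {
    const needed = FEATURE_LEVEL[feature];
    if (needed === undefined) {
      throw new Error(`Unknown feature: ${feature}`);
    }
    if (needed > level) {
      level = needed;
    }
  }
  return level;
}

// Whether a feature may be used under the tier the user granted.
// Unknown features are denied rather than guessed at.
function isAllowed(feature: string, granted: Level): boolean {
  const needed = FEATURE_LEVEL[feature];
  return needed !== undefined && needed <= granted;
}
```

Because the tiers are ordered, a page granted camera access is also allowed everything in the two lower tiers, while a page granted only basic access is refused world geometry.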

If the user gives permission, an icon in the URL bar shows the permission level, similar to the camera and microphone access icons in Firefox today.


Tapping on the icon brings up the permission dialog again, allowing the user to temporarily restrict the flow of data to the web app. This is particularly relevant for modifying access to cameras, where a mobile user (especially when HMDs are more common) may want to turn off sensors depending on the location, or who is nearby.

A final aspect of permissions we are exploring is the idea of a "Lite" mode. In each of the dialogs above, a user can select Lite mode, which brings up a UI allowing them to select a single ARKit plane.

The APIs that expose world knowledge to the web page (including hit testing in the most basic level, and geometry in the middle level) will only use that single plane as the source of their actions. Only that plane would produce hits, and only that plane would have its geometry sent into the page. This would allow the user to limit the information passed to a page, while still being able to access AR apps on the web.
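
The filtering described here could look roughly like the sketch below. It is not the Viewer's implementation: the `Plane`, `Hit`, and `WorldSnapshot` types and the `applyLiteMode` function are hypothetical stand-ins for whatever the app tracks internally, and the sketch assumes each hit records the plane it landed on.

```typescript
// Hypothetical, simplified view of the world data a page could receive.
interface Plane {
  id: string;
  polygon: number[][]; // boundary vertices as [x, y, z] triples
}

interface Hit {
  planeId: string; // the plane this hit landed on
  distance: number; // distance from the ray origin, in meters
}

interface WorldSnapshot {
  planes: Plane[];
  hits: Hit[];
}

// Restrict a snapshot to the one plane the user selected in Lite mode.
// Passing null means Lite mode is off and the snapshot is returned unchanged.
function applyLiteMode(
  world: WorldSnapshot,
  selectedPlaneId: string | null
): WorldSnapshot {
  if (selectedPlaneId === null) {
    return world;
  }
  return {
    // Only the selected plane's geometry reaches the page.
    planes: world.planes.filter((plane) => plane.id === selectedPlaneId),
    // Only hits against the selected plane are reported.
    hits: world.hits.filter((hit) => hit.planeId === selectedPlaneId),
  };
}
```

Filtering at this boundary means the page-facing APIs need no knowledge of Lite mode; they simply see a world that contains a single plane.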

Moving Forward

We are excited about the future of XR applications on the web, and will be using the WebXR Viewer to provide access to WebXR on iOS, as well as a testbed for new APIs and interaction ideas. We hope you will join us on the next step in the evolution of WebXR!