Firefox Reality Developers Guide

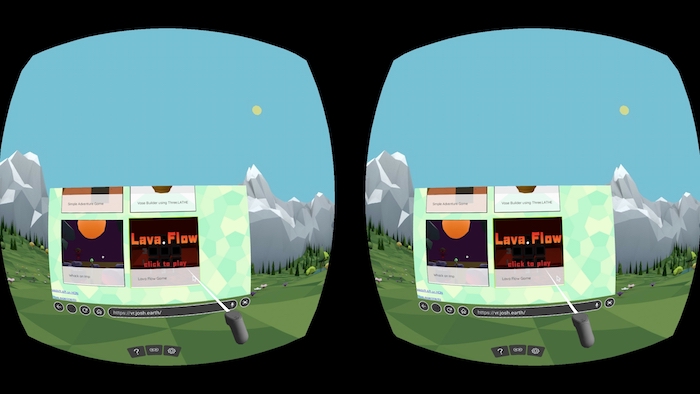

Firefox Reality, Mozilla's VR web browser, is getting closer to release, so let's talk about how to make your experiences work well in this new browser.

Use a Framework with WebVR 1.1 Support

Building WebVR applications from scratch requires using WebGL, which is very low level. Most developers use some sort of a library, framework, or game engine to do the heavy lifting for them. These are some commonly used libraries that support WebVR.

three.js

As of June 2018 three.js has new and improved WebVR support. It should just work. See these official examples of how to use it.

A-Frame

A-Frame is a framework built on top of three.js that lets you build VR scenes using an HTML-like syntax. It is the best way to get started with VR if you have never used it before.

Babylon

Babylon.js is an open source high-performance 3D engine for the web. Since version 2.5 it has full WebVR support. This doc explains how to use the WebVRFreeCamera class.

Amazon Sumerian

Amazon’s online Sumerian tool lets you easily build VR and AR experiences, and it supports WebVR out of the box.

PlayCanvas

PlayCanvas is a web-first game engine, and it supports WebVR out of the box.

Existing WebGL applications

If you have an existing WebGL application you can easily add WebVR support. This blog covers the details.

No matter what framework you use, make sure it supports the WebVR 1.1 API, not the newer WebXR API. WebXR will eventually be a full web standard but no browser currently ships with non-experimental support. Use WebVR for now, and in the future a polyfill will make sure existing applications continue to work once WebXR is ready.

Optimize Like it’s the Mobile Web, Because it is.

Developing for VR headsets is just like developing for mobile. Though some VR headsets run attached to a desktop with a beefy graphics card, most users have a mobile device like a smartphone or a dedicated headset like the Oculus Go or Lenovo Mirage. Regardless of the actual device, rendering to a headset costs at least twice as much as a non-immersive experience because everything must be rendered twice, once for each eye.

To help your VR application be fast, keep the draw-call count to a minimum. The draw-call count matters far more than the total number of polygons in your scene, though polygons are important as well. Drawing 10 polygons 100 times is far slower than drawing 100 polygons 10 times.
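To make the draw-call point concrete, here is a rough sketch that estimates draw calls for a list of meshes. The `MeshInfo` shape and both function names are hypothetical, and the batched estimate assumes that all static meshes sharing a material can be merged into one geometry, which real scenes only approximate.

```typescript
// Hypothetical, simplified mesh description for illustration only.
interface MeshInfo {
  materialId: string;
  triangles: number;
}

// Unbatched scene: roughly one draw call per mesh.
function naiveDrawCalls(meshes: MeshInfo[]): number {
  return meshes.length;
}

// Simplifying assumption: meshes that share a material are merged
// into a single geometry, so each material costs one draw call.
function batchedDrawCalls(meshes: MeshInfo[]): number {
  return new Set(meshes.map((mesh) => mesh.materialId)).size;
}

// The polygon count is identical either way; only the call count changes.
function totalTriangles(meshes: MeshInfo[]): number {
  return meshes.reduce((sum, mesh) => sum + mesh.triangles, 0);
}
```

For a scene of 100 ten-triangle meshes that use only two materials, this model reports 100 draw calls unbatched and 2 when merged, with the same 1,000 triangles in both cases.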

Lighting also tends to be expensive on mobile, so use fewer lights or cheaper materials. If a lambert or phong material will work just as well as a full PBR material (physically based rendering), go for the cheaper option.

Compress your 3D models into GLB files, the binary form of glTF, instead of shipping plain glTF. Decompression time is about the same but the download time will be much faster. There are a variety of command-line tools to do this, or you can use this web-based tool by SBtron. Just drag in your files and get back a GLB.

Always use powers-of-2 texture sizes and try to keep textures under 1024 × 1024. Mobile GPUs don’t have nearly as much texture memory as desktops. Plus big textures just take a long time to download. You can often use 512 or 128 for things like bump maps and light maps.
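As a small illustration of the sizing rule, the sketch below checks whether a dimension is a power of 2 and picks a target size by rounding down and capping at 1024. The function names and the round-down policy are assumptions made for this example, not part of any framework.

```typescript
// True for 1, 2, 4, 8, ... (valid for the integer ranges used by texture sizes).
function isPowerOfTwo(n: number): boolean {
  return Number.isInteger(n) && n > 0 && (n & (n - 1)) === 0;
}

// Largest power of 2 that is less than or equal to n (n must be >= 1).
function floorPowerOfTwo(n: number): number {
  let power = 1;
  while (power * 2 <= n) {
    power *= 2;
  }
  return power;
}

// Hypothetical policy: round down to a power of 2, then cap at maxSize.
function targetTextureSize(size: number, maxSize: number = 1024): number {
  if (size < 1) {
    throw new RangeError("texture size must be at least 1");
  }
  return Math.min(floorPowerOfTwo(size), maxSize);
}
```

Under this policy a 1500-pixel texture would be resized to 1024, a 700-pixel one to 512, and a 2048-pixel one would be capped at 1024.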

For your 2D content, don’t assume any particular screen size or resolution. Use good responsive design practices that work well on a screen of any shape or size.

Lazy load your assets so that the initial experience is good. Most VR frameworks have a way of loading resources on demand. three.js uses the DefaultLoadingManager. Your goal is to get the initial screen up and running as quickly as possible. Keep the initial download to under 1MB if at all possible. A fast loading experience is one that people will want to come back to over and over.
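One framework-independent way to think about on-demand loading is a small cache that starts a download only when an asset is first requested. This is a minimal sketch, not how three.js or any other framework does it; the `LazyAssets` class and the loader callback are hypothetical names for illustration.

```typescript
// A loader is any function that fetches and decodes one asset.
type AssetLoader<T> = (url: string) => Promise<T>;

class LazyAssets<T> {
  private cache = new Map<string, Promise<T>>();
  private load: AssetLoader<T>;

  constructor(load: AssetLoader<T>) {
    this.load = load;
  }

  // Starts the download on first request; later requests share the same promise.
  get(url: string): Promise<T> {
    const cached = this.cache.get(url);
    if (cached !== undefined) {
      return cached;
    }
    const pending = this.load(url);
    this.cache.set(url, pending);
    // Forget failed loads so a later request can retry.
    pending.catch(() => {
      this.cache.delete(url);
    });
    return pending;
  }
}
```

With something like this, the splash screen requests only what it needs, and heavier models are requested when the user actually reaches the part of the scene that uses them.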

Prioritize framerate over everything else. In VR, having a high framerate and smooth animation matters far more than the details of your models. In VR, human eyes (and ears) are more sensitive to latency, low framerates, skipped frames, and janky animation than they are on a 2D screen. Your users will be very tolerant of low-polygon models and less-realistic graphics as long as the experience is fun and smooth.

Don’t do browser sniffing. If you hardcode in detection for certain devices, your code may work today, but will break as soon as a new device comes to market. The WebVR ecosystem is constantly growing and changing and a new device is always around the corner. Instead check for what the VR API actually returns, or rely on your framework to do this for you.
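A minimal sketch of feature detection, assuming the WebVR 1.1 entry point `navigator.getVRDisplays()`. The `NavigatorLike` interface and the helper names are made up for this example so that the code does not depend on browser type definitions; in a page you would pass in `navigator`.

```typescript
// Only the part of navigator this sketch cares about.
interface NavigatorLike {
  getVRDisplays?: () => Promise<unknown[]>;
}

// Ask the API what is available instead of parsing the user agent string.
function hasWebVR(nav: NavigatorLike): boolean {
  return typeof nav.getVRDisplays === "function";
}

// Resolves to the connected displays, or an empty list if WebVR is
// missing or the request fails.
async function listVRDisplays(nav: NavigatorLike): Promise<unknown[]> {
  if (typeof nav.getVRDisplays !== "function") {
    return [];
  }
  try {
    return await nav.getVRDisplays();
  } catch (_err) {
    return [];
  }
}
```

An empty result simply means "show the 2D experience"; no device names are hardcoded, so a new headset that implements the API should work without code changes.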

Assume the controls could be anything. Some headsets have a full 6DoF controller (six degrees of freedom). Some are 3DoF. Some users are on a desktop using a mouse or on a phone with a touchscreen. And some devices have no input at all, relying on gaze-based interaction. Make sure your application works in any of these cases. If you aren’t able to make it work in certain cases (say, gaze-based won’t work), then try to provide a degraded, view-only experience instead. Whatever you do, don’t block viewers if the right device isn’t available. Someone who looks at your app might still have a headset but just isn’t using it right now. Provide some level of experience for everyone.
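If you handle input yourself rather than through a framework, one possible approach is to classify whatever the Gamepad API reports. The sketch below assumes the WebVR 1.1 gamepad extensions, where a tracked controller exposes `pose.hasPosition` and `pose.hasOrientation`. The `GamepadLike` interface, the `InputKind` labels, and `classifyInput` are hypothetical names for this example.

```typescript
// The subset of the gamepad pose that this sketch reads.
interface PoseLike {
  hasOrientation: boolean;
  hasPosition: boolean;
}

interface GamepadLike {
  pose?: PoseLike | null;
}

// "none" means: fall back to gaze, mouse, or touch interaction.
type InputKind = "6dof" | "3dof" | "gamepad" | "none";

// Reports the most capable controller found; null slots are skipped.
function classifyInput(pads: Array<GamepadLike | null>): InputKind {
  let best: InputKind = "none";
  for (const pad of pads) {
    if (pad === null) {
      continue;
    }
    const pose = pad.pose;
    if (pose && pose.hasOrientation && pose.hasPosition) {
      return "6dof";
    }
    if (pose && pose.hasOrientation) {
      best = "3dof";
    } else if (best === "none") {
      best = "gamepad";
    }
  }
  return best;
}
```

In a page the argument would typically come from `navigator.getGamepads()`. Every branch, including "none", should lead to some usable experience.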

Never enter VR directly on page load unless coming from a VR page. On some devices the page is not allowed to enter VR without a user interaction. On other devices audio may require user interaction as well. Always have some sort of a 2D splash page that explains where the user is and what the interaction will be, then have a big ‘Enter VR’ button to actually go into immersive mode. The exception is if the user is coming from another page and is already in VR. In this case you can jump right in. This A-Frame doc explains how it works.
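For applications written directly against WebVR 1.1, the "Enter VR" button pattern might look roughly like this. The sketch assumes `VRDisplay.requestPresent()` and `VRDisplay.isPresenting` from the WebVR 1.1 API; the small interfaces and the `wireEnterVR` helper are hypothetical and exist only to keep the example self-contained. Frameworks such as A-Frame provide their own button.

```typescript
// The subset of a WebVR 1.1 VRDisplay that this sketch uses.
interface VRDisplayLike {
  isPresenting: boolean;
  requestPresent(layers: Array<{ source: unknown }>): Promise<void>;
}

// Anything that can report clicks, such as a button element.
interface ClickTarget {
  addEventListener(type: "click", listener: () => void): void;
}

// Only ask for immersive mode from inside the click handler, never on page load.
function wireEnterVR(button: ClickTarget, display: VRDisplayLike, canvas: unknown): void {
  button.addEventListener("click", () => {
    if (display.isPresenting) {
      return;
    }
    display.requestPresent([{ source: canvas }]).catch(() => {
      // The request was rejected; stay on the 2D splash page.
    });
  });
}
```

Because the request happens inside the click handler, it counts as a user interaction on devices that require one.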

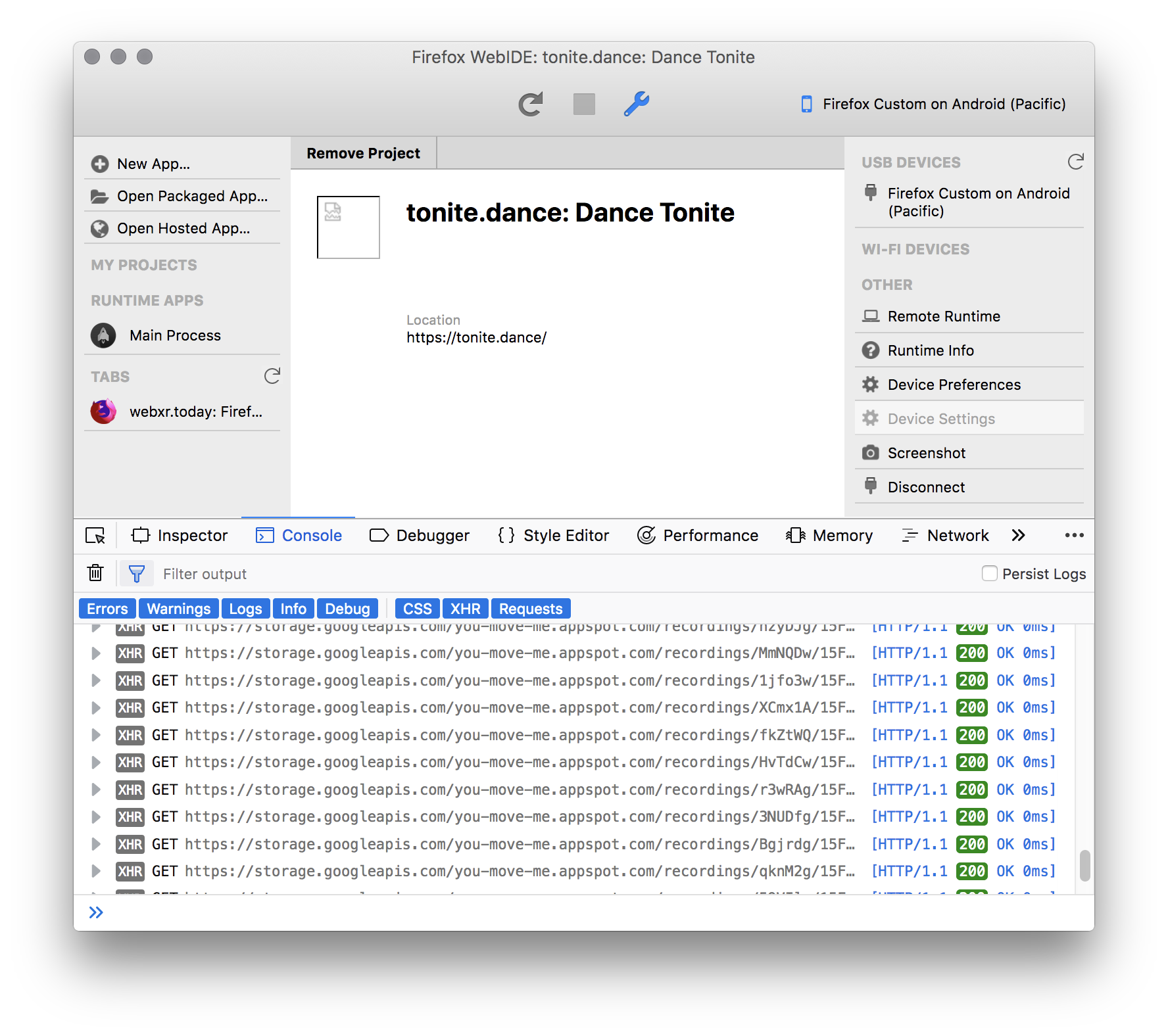

Use Remote Debugging on a Real Device

The real key to creating a responsive and fun WebVR experience is debugging on your desktop, a phone, and at least one real VR headset. Using a desktop browser is fine during development, but there is no substitute for an actual device strapped to your noggin. Things which seem fine on desktop will be annoying in a real headset. The FoV (Field of View) of headsets is radically different from that of a phone or desktop window, so different things may be visible in each. You must test across form factors.

The Oculus Go is fairly easy to acquire and very affordable. If you have a smartphone, also consider using a plastic or cardboard viewer; these are cheap and easy to find.

Firefox Reality supports remote debugging over USB so you can see the performance and console right in your desktop browser.

Get your Site Featured

If you have a cool creation you want to share, let us know. We can help you get great exposure for your VR website. Submit your creation to this page. You must include an email address or we won’t be able to contact you. To qualify, your website must at least run in WebVR in Firefox Reality on the Oculus Go. If you don’t have one to test with, please contact us and we will help you test it.

Your site must support some sort of VR experience without having to create an account or pay money. Asking for money/account for a deeper experience is fine, but it must provide some sort of functionality right off the bat (intro level, tutorial, example, etc.).

The Future is Now

We are so excited that the web is at the forefront of VR development. Our goal is to help you create fun and successful VR content. This post contains a few tips to help, but we are sure you will come up with your own tips as well. Happy coding.