Browsing from the Edge

We are currently seeing two changes in computing: improvements in network bandwidth and latency brought on by the deployment of 5G networks, and the arrival of a large number of low-power mobile devices and headsets. Together, these provide an opportunity for rich web experiences, driven by off-device computing and rendering, delivered over a network to a lightweight user agent.

As we’ve improved our Firefox Reality browser for VR headsets and the content available on the web has kept getting better, we have learned that the biggest factors limiting usage are the battery life and compute capabilities of head-worn devices. These devices are designed to be as lightweight, cool, and comfortable as possible, which is directly at odds with hours of heavy content consumption. Whether for VR or AR headsets, offloading computation to a separate high-end machine, which renders the content and encodes it in a form suitable for viewing on a mobile device or headset, makes it possible to render potentially massive scenes and stream them even to low-end devices.

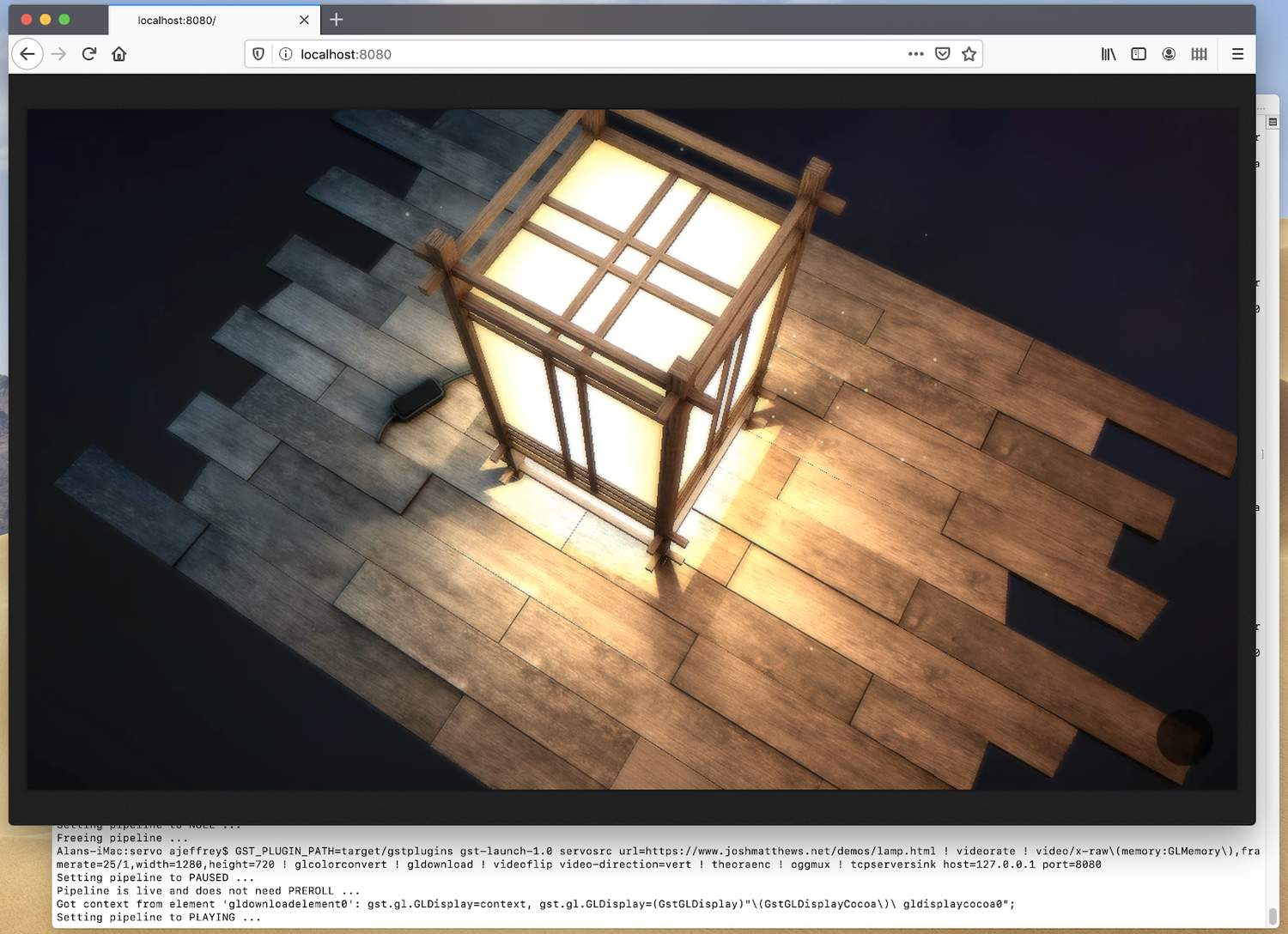

Mozilla’s Mixed Reality team has been working on embedding Servo, a modern web engine which can take advantage of modern CPU and GPU architectures, into GStreamer, a streaming media platform capable of producing video in a variety of formats using hardware-accelerated encoding pipelines. We have a proof-of-concept implementation that uses Servo as a back end, rendering web content to a GStreamer pipeline, from which it can be encoded and streamed across a network. The plugin is designed to make use of GPUs for hardware-accelerated graphics and video encoding, and will avoid unnecessary readback from the GPU to the CPU, which can otherwise lead to high power consumption, low frame rates, and additional latency. Together with Mozilla’s WebRender, this means web content will be rendered from CSS through to streaming video without ever leaving the GPU.
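To make the shape of such a pipeline a little more concrete, here is a minimal sketch in Python that only builds a `gst-launch-1.0`-style pipeline description as a string. It is an illustration under assumptions, not the plugin's documented interface: the source element name `servosrc` and its `url` property are hypothetical placeholders, and `StreamConfig` and `build_pipeline` are names made up for this example. The remaining stages (`videoconvert`, `x264enc`, `rtph264pay`, `udpsink`) are standard GStreamer elements.

```python
from dataclasses import dataclass


@dataclass
class StreamConfig:
    url: str                 # web page to render
    host: str                # address of the receiving device
    port: int = 5000
    width: int = 1280
    height: int = 720
    framerate: int = 30
    # ASSUMPTION: hypothetical element name for the Servo source;
    # check the plugin's README for the real one.
    source_element: str = "servosrc"
    # Any H.264 encoder installed locally. x264enc is a software encoder;
    # a hardware encoder element could be substituted here.
    encoder_element: str = "x264enc"


def build_pipeline(cfg: StreamConfig) -> str:
    """Return a gst-launch-1.0 style description: render, encode, stream."""
    caps = (
        f"video/x-raw,width={cfg.width},height={cfg.height},"
        f"framerate={cfg.framerate}/1"
    )
    stages = [
        # ASSUMPTION: the `url` property is a placeholder, not a confirmed API.
        f"{cfg.source_element} url={cfg.url}",
        caps,                 # constrain the frame size and rate
        "videoconvert",       # convert to a pixel format the encoder accepts
        cfg.encoder_element,  # encode frames as H.264
        "rtph264pay",         # packetize the H.264 stream as RTP
        f"udpsink host={cfg.host} port={cfg.port}",  # send over the network
    ]
    return " ! ".join(stages)
```

Calling `build_pipeline(StreamConfig(url="https://example.com", host="192.0.2.1"))` returns a single string whose stages are joined with ` ! `, which could be passed to `gst-launch-1.0` once the placeholder names are replaced with real ones. Note that this simplified sketch uses system-memory elements, so it does not show the GPU-only path described above; keeping frames on the GPU would need GL-memory caps and a hardware encoder.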

Today, the GStreamer Servo plugin is available from our GitHub repo, and can be used to stream 2D non-interactive video content across a network. This is still a work in progress! We are hoping to add immersive, interactive experiences, which will make it possible to view richer content on a wide range of mobile devices and headsets. Contact mr@mozilla.com if you’re looking for specific support for your hardware or platform!